6 Signs of Malicious Traffic on Your Web or Mobile App

We sat down with Dan Woods, VP of the Shape Intelligence Center, which is now part of F5 Networks, to talk about how best to identify malicious traffic on web and mobile applications. This article summarizes six of the primary indicators his team looks for to distinguish genuine users from imposters.

- Disruption of normal traffic patterns

- Contrived or missing user interactions

- Changes in password key event patterns or frequency

- Highly efficient user interactions

- Spoofed user agent strings

- Spoofed HTTP header data

Additional online traffic during the pandemic has increased cases of fraud and credential stuffing, giving fraudsters more ways to get into your web and mobile platforms. During such an attack, it’s not uncommon for 80-99% of traffic to ultimately be found to be malicious.

The high volume and velocity of malicious traffic during such an attack can make it difficult to pinpoint the source and apply countermeasures. Not only are these high-volume attacks damaging to brand reputation and revenue, but they are extremely taxing to backend systems and may even result in extra charges for your fraud prevention tools.

Here are six of the primary indicators to watch for in order to identify malicious traffic on your web or mobile application.

1. Disruption of normal traffic patterns

A disruption of normal traffic patterns is often the first indicator that something is amiss on your web or mobile application. Over time, you should notice specific patterns in your traffic, the most common of which is based on time of day. If your traffic doesn’t follow a diurnal pattern (peaking during daytime hours and falling during nighttime hours), that’s a sign that malicious activity may be under way.

Additionally, if traffic spikes are unrelated to marketing campaigns, such as email campaigns or television ads, that’s another sign your app might be receiving a high volume of malicious traffic.
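As a rough illustration of the diurnal check described above, the sketch below compares average daytime traffic with average nighttime traffic for a single day. The function names, the 08:00 to 22:00 daytime window, and the 1.5 threshold are all assumptions made for this example, not values from Shape, and the hourly counts are assumed to be in the local time of your main user base.

```python
from statistics import mean


def diurnal_ratio(hourly_counts, day_hours=range(8, 22)):
    """Return mean daytime requests divided by mean nighttime requests.

    hourly_counts: 24 request counts, index 0 = midnight to 01:00.
    day_hours: hours treated as "daytime" (an assumption for this sketch).
    """
    if len(hourly_counts) != 24:
        raise ValueError("expected 24 hourly counts")
    day = [hourly_counts[h] for h in day_hours]
    night = [c for h, c in enumerate(hourly_counts) if h not in day_hours]
    night_mean = mean(night)
    if night_mean == 0:
        return float("inf")
    return mean(day) / night_mean


def looks_diurnal(hourly_counts, min_ratio=1.5):
    """True when daytime traffic clearly exceeds nighttime traffic."""
    return diurnal_ratio(hourly_counts) >= min_ratio


# Hypothetical data: a normal day versus a day flattened by round-the-clock automation.
normal_day = [20] * 8 + [100] * 14 + [20] * 2
flat_day = [100] * 24
print(looks_diurnal(normal_day), looks_diurnal(flat_day))
```

A flat or inverted curve is only a prompt for investigation. An app with a global audience may legitimately show a weaker day and night contrast.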

2. Contrived or missing user interactions

When analyzing in-app interactions like keystrokes and mouse clicks, you’ll find that human interactions tend to be clumsy and inefficient. In an automated interaction, however, you’ll find that multiple interactions happen within milliseconds.

Or you may find that a user is clicking the same XY coordinate over and over again, which is extremely difficult for a human user to replicate. Even if the attacker clicks a different XY coordinate each time, any kind of formulaic relationship between successive coordinates is also a signal of a synthetic event.

An example of contrived mouse clicks following a formulaic pattern of XY coordinates.
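The two click patterns described above (the same coordinate repeated, and coordinates related by a formula) can be sketched as a simple check. This is a minimal example that only covers the simplest formulaic relationship, a constant offset between successive clicks. The function name and return labels are hypothetical.

```python
def click_pattern(clicks):
    """Classify a sequence of (x, y) click coordinates.

    Returns "repeated" if every click hits the same point,
    "constant_step" if each click is offset from the previous one by the
    same (dx, dy), and None if neither simple pattern is found.
    """
    if len(clicks) < 3:
        return None  # too few clicks to call anything a pattern
    if len(set(clicks)) == 1:
        return "repeated"
    steps = {
        (x2 - x1, y2 - y1)
        for (x1, y1), (x2, y2) in zip(clicks, clicks[1:])
    }
    if len(steps) == 1:
        return "constant_step"
    return None


print(click_pattern([(100, 200)] * 5))
print(click_pattern([(10, 10), (15, 20), (20, 30), (25, 40)]))
print(click_pattern([(10, 10), (40, 12), (33, 90), (5, 7)]))
```

An attacker can of course use a more elaborate formula, so a real detector would need to test for more relationships than a fixed step.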

In the case of a malware attack, missing interactions are as much of an indicator as contrived interactions. For example, if you see a user who logs into their frequent flier account and redeems points for a gift card without touching their keyboard, mouse or smartphone screen, that would indicate a malware attack running in the background.

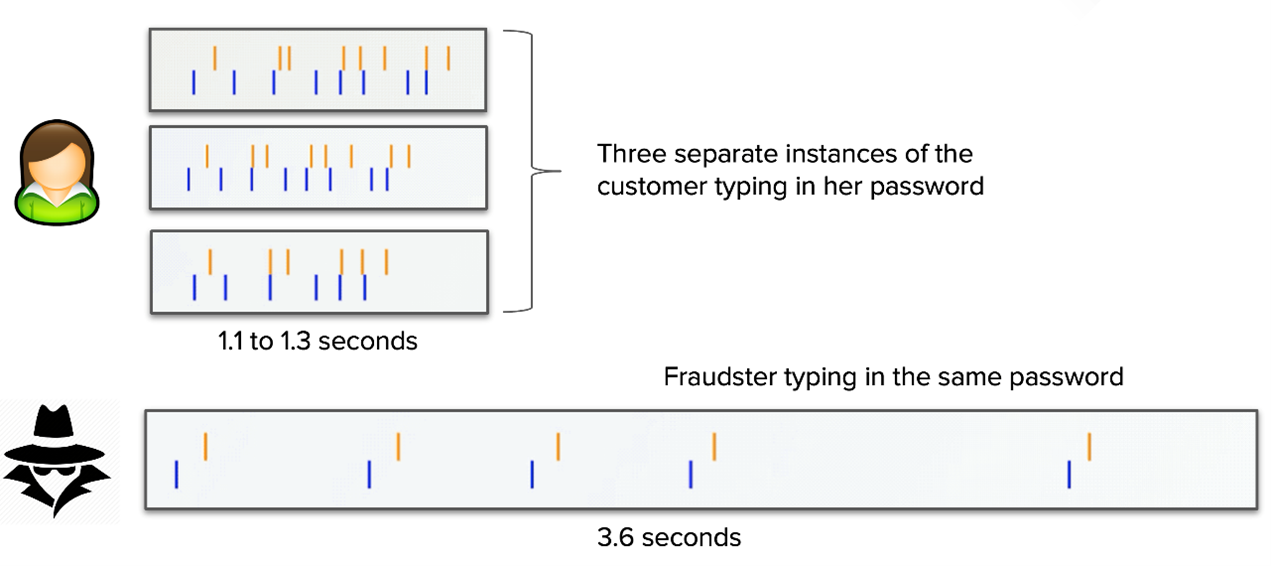
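The missing-interaction case from this section can be sketched as a filter over session records: flag any session that completed a sensitive action without a single keyboard, mouse, or touch event. The `Session` fields below are assumptions for illustration and presume that your client-side telemetry already counts input events per session.

```python
from dataclasses import dataclass


@dataclass
class Session:
    session_id: str
    input_events: int       # key, mouse, and touch events seen client-side
    sensitive_actions: int  # e.g. point redemptions or payee additions


def sessions_missing_interaction(sessions):
    """Return IDs of sessions that acted without any observed user input."""
    return [
        s.session_id
        for s in sessions
        if s.sensitive_actions > 0 and s.input_events == 0
    ]


# Hypothetical sessions: only "b" redeemed points with no input at all.
sample = [
    Session("a", input_events=42, sensitive_actions=1),
    Session("b", input_events=0, sensitive_actions=1),
    Session("c", input_events=0, sensitive_actions=0),
]
print(sessions_missing_interaction(sample))
```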

3. Changes in password key event patterns or frequency

A fraudulent login attempt will likely have a much different pattern and frequency of key events than a human attempt. When repeated by a genuine user, password key events should follow roughly the same pattern and timespan. After entering a password many times, a user develops a rhythm that a fraudster is unlikely to match.
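One way to picture the rhythm comparison is to look only at the timing between key events, never at the keys themselves. The sketch below measures how far the inter-key intervals of a new attempt are from a stored baseline for the same account. The function names and sample timestamps are assumptions for this example, and a production system would likely use a more robust statistical model.

```python
def intervals(timestamps_ms):
    """Gaps between consecutive key events, in milliseconds."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]


def rhythm_distance(baseline_ms, attempt_ms):
    """Mean absolute difference between inter-key intervals, in ms.

    Returns None when the two sequences have different numbers of key
    events (or fewer than two), since they cannot be compared directly.
    """
    base = intervals(baseline_ms)
    attempt = intervals(attempt_ms)
    if not base or len(base) != len(attempt):
        return None
    return sum(abs(b - a) for b, a in zip(base, attempt)) / len(base)


# Hypothetical key-down timestamps for a five-character password.
baseline = [0, 120, 260, 380, 540]
same_user = [0, 130, 250, 390, 530]
scripted = [0, 5, 10, 15, 20]  # evenly spaced, far faster than the baseline

print(rhythm_distance(baseline, same_user))  # small distance
print(rhythm_distance(baseline, scripted))   # large distance
```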

4. Highly efficient user interactions

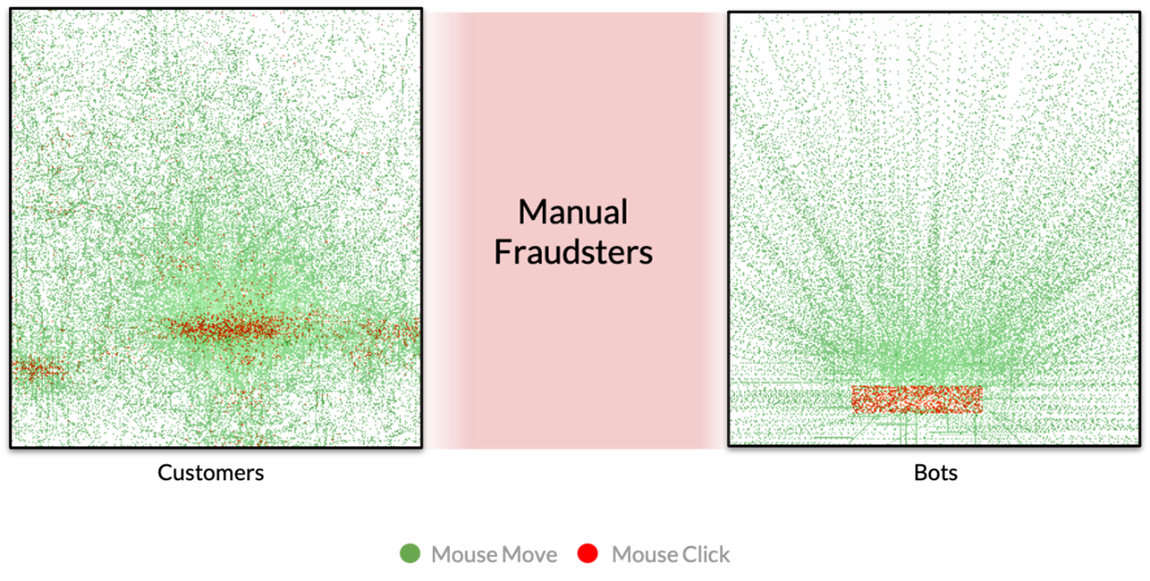

Another way to tell the difference between good traffic and malicious traffic is the efficiency of interactions. Real human interactions are somewhat random. Sometimes a user will click even when there isn’t anything to click. They may be reading the screen, moving the mouse, and clicking just to pass time.

Fraudulent bot interactions, on the other hand, are far more direct: the mouse often moves in perfectly straight lines. Even if the bot adds entropy, it typically shows up as smooth arcs. And on a login form, for example, a bot has no incentive to click anywhere but the username and password fields and the submit button.

The mouse movements and clicks of genuine users will be much less efficient than those of a bot or fraudster.

Fraudulent manual interactions are right in the middle when it comes to efficiency. They’re going to be more efficient than good human interactions, but not as efficient as bot interactions.

Let’s examine this hypothetical: a user logging into their financial application to add a payee and send a sum of cash. A real user would likely have to search out the “add payee” button because they don’t add a payee every day, which would add a second or two to the workflow. It’s possible an imposter adds payees hundreds of times per day, so they would be able to navigate that workflow extremely quickly.
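The efficiency idea in this section can be illustrated with a simple path metric: the straight-line distance between the first and last pointer positions divided by the distance actually travelled. A perfectly straight bot path scores 1.0, while a wandering human path scores lower. This is a minimal sketch with a hypothetical function name, not Shape's method, and it would not catch the smooth arcs mentioned above on its own.

```python
import math


def path_efficiency(points):
    """Ratio of direct distance to travelled distance for a pointer path.

    points: list of (x, y) positions in the order they were recorded.
    Returns a value in (0, 1], or None if the pointer never moved.
    """
    if len(points) < 2:
        return None
    travelled = sum(math.dist(a, b) for a, b in zip(points, points[1:]))
    if travelled == 0:
        return None
    return math.dist(points[0], points[-1]) / travelled


# Hypothetical paths from the same start point to the same button.
bot_path = [(0, 0), (50, 0), (100, 0)]
human_path = [(0, 0), (30, 40), (0, 80), (100, 0)]

print(path_efficiency(bot_path))
print(path_efficiency(human_path))
```

The same ratio could be applied to a whole workflow, such as the time or number of steps an account takes to add a payee compared with its own history.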

5. Spoofed user agent strings

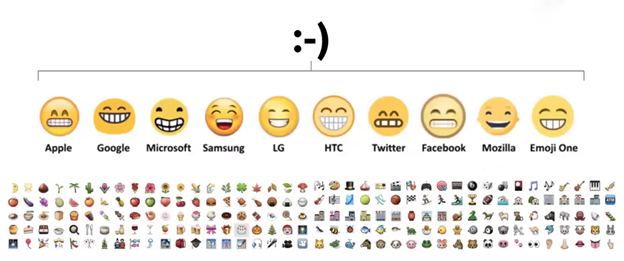

In addition to collecting behavioral biometrics, it’s also important to look at the environment the traffic is coming from. Shape will look at how emojis are rendered to determine whether or not traffic is being spoofed. Emojis will render differently on different platforms and applications.

So if a user agent string purports to be Chrome, but the browser is rendering emojis like Firefox, it’s likely fraudulent traffic. A genuine user would have no incentive to lie about their user agent string.

Similarly, you can look at how very large hexadecimal numbers are converted on each platform. Shape has developed JavaScript code that asks the browser to convert a hexadecimal value to decimal. Different browsers will provide slightly different answers due to the way they choose to round. The difference is negligible, but enough to help identify which browser the traffic is coming from.

Again, if the user agent string tells us the traffic is coming from Chrome, but they’re converting the hexadecimal like Firefox, then we know it’s fraudulent.
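On the server side, the comparison described above reduces to checking the browser family claimed in the user agent string against the family inferred from client-side signals. The sketch below assumes a hypothetical `observed_engine` value produced by some separate client-side classifier (for example, one based on emoji rendering or number conversion). That classifier is not shown, and the substring matching is deliberately simplistic.

```python
def claimed_engine(user_agent):
    """Guess the rendering engine family a user agent string claims.

    Uses simple substring checks. Order matters because Chrome's user
    agent string also contains "Safari/".
    """
    if "Firefox/" in user_agent:
        return "gecko"
    if "Chrome/" in user_agent or "Chromium/" in user_agent:
        return "blink"
    if "Safari/" in user_agent:
        return "webkit"
    return None


def ua_mismatch(user_agent, observed_engine):
    """True when the claimed engine contradicts the observed one.

    observed_engine is an assumed input: the output of a client-side
    fingerprinting step that this sketch does not implement.
    """
    claimed = claimed_engine(user_agent)
    if claimed is None or observed_engine is None:
        return False  # not enough information to call it a mismatch
    return claimed != observed_engine


chrome_ua = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
)
print(ua_mismatch(chrome_ua, "blink"))  # consistent
print(ua_mismatch(chrome_ua, "gecko"))  # claims Chrome, behaves like Firefox
```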

6. Spoofed HTTP header data

Oftentimes, imposters will try to spoof the HTTP headers that a genuine browser or application would send, but they will struggle to get everything correct. Therefore, the headers can provide a lot of useful signals that the traffic is fraudulent.
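One simple header signal is absence: mainstream browsers typically send headers such as Accept, Accept-Language, and Accept-Encoding on page requests, and a hand-built request may omit some of them. The sketch below reports which expected headers are missing. The function name and the expected list are assumptions for illustration, and you would tune that list to what your own genuine clients actually send.

```python
# Illustrative list only; adjust to match your real client traffic.
EXPECTED_HEADERS = ["Accept", "Accept-Language", "Accept-Encoding"]


def missing_headers(headers, expected=EXPECTED_HEADERS):
    """Return the expected header names absent from a request.

    headers: mapping of header name to value. Header names are compared
    case-insensitively, as HTTP header names are not case-sensitive.
    """
    present = {name.lower() for name in headers}
    return sorted(h for h in expected if h.lower() not in present)


# Hypothetical request that claims to be a browser but sends very little.
request_headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "accept": "*/*",
}
print(missing_headers(request_headers))
```

Missing headers are a weak signal on their own. They are most useful when combined with the user agent and behavioral checks from the earlier sections.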

Get in Touch

Many of these indicators can’t be uncovered with standard application security monitoring. The addition of a tool like Shape can improve the efficiency and effectiveness of application fraud detection and response by incorporating the following:

- client-side signals

- behavioral biometrics

- network signals

- machine learning and artificial intelligence

To learn more about how to incorporate Shape or other application security tools into your network infrastructure, contact Kudelski Security here.